Hook

Training completion is easy to report.

Training effectiveness is harder to show, and it is what pharma teams actually get judged on when a procedure changes and people still have to do the work correctly.

Real-world scenario

A Standard Operating Procedure (SOP) gets revised after a deviation or through change control.

The team sends out the training. People click through it. The dashboard turns green. Everyone moves on.

A week later, somebody on the floor is still working the old way. The form may be complete and the signature may be there, but the new procedure has not landed where it matters.

That is the problem. Completion tells you the training happened. It does not tell you whether the behavior changed.

What actually changes

| Area | Completion-only setup | Effectiveness-driven eQMS |
|---|---|---|
| Trigger | Training is issued because someone said it should be | Training is linked to the document change, CAPA, or audit finding that caused it |
| Evidence | A checkbox, a signature, maybe a certificate | Assignment, materials, assessment attempts, completion, and review history |
| Competence | Assumed because the module was finished | Shown through assessment, observation, or defined effectiveness evidence |
| Retraining | Added later when someone notices a gap | Assigned directly from change control or CAPA and tracked through closure |
| Inspection support | Completion lists and spreadsheets | Training history tied to the reason the training was needed |
| Control | Looks tidy on paper, but gaps show up in practice | Training stays attached to the quality event that triggered it |

Callout: what inspectors actually care about

The real question is not whether people finished the module.

The question is whether the new procedure is being used by the same people who signed the record.

If the answer is no, the training record is only half the story.

What a weak training setup leaves behind

Callout: paper compliance is not real compliance

A training system that only tracks completion gives you the illusion of control.

That usually turns into:

- green reports while the floor is still using the wrong SOP

- retraining that gets missed after a change

- CAPAs that close before the behavior changes

- competence gaps that show up only when somebody is already under pressure

- too much time spent explaining the record instead of defending the process

What changes for QA and operations

For QA, the difference is simple:

- you can tie training back to the change that caused it

- you can see who actually needs retraining

- you can show what was assigned, completed, and assessed

- you are not hunting through a separate spreadsheet to defend the record

For operations, it matters because:

- the team is not left guessing which version to follow

- retraining gets attached to the work, not left as a reminder

- supervisors can see who is current and who is not

- floor behavior is easier to line up with the written process

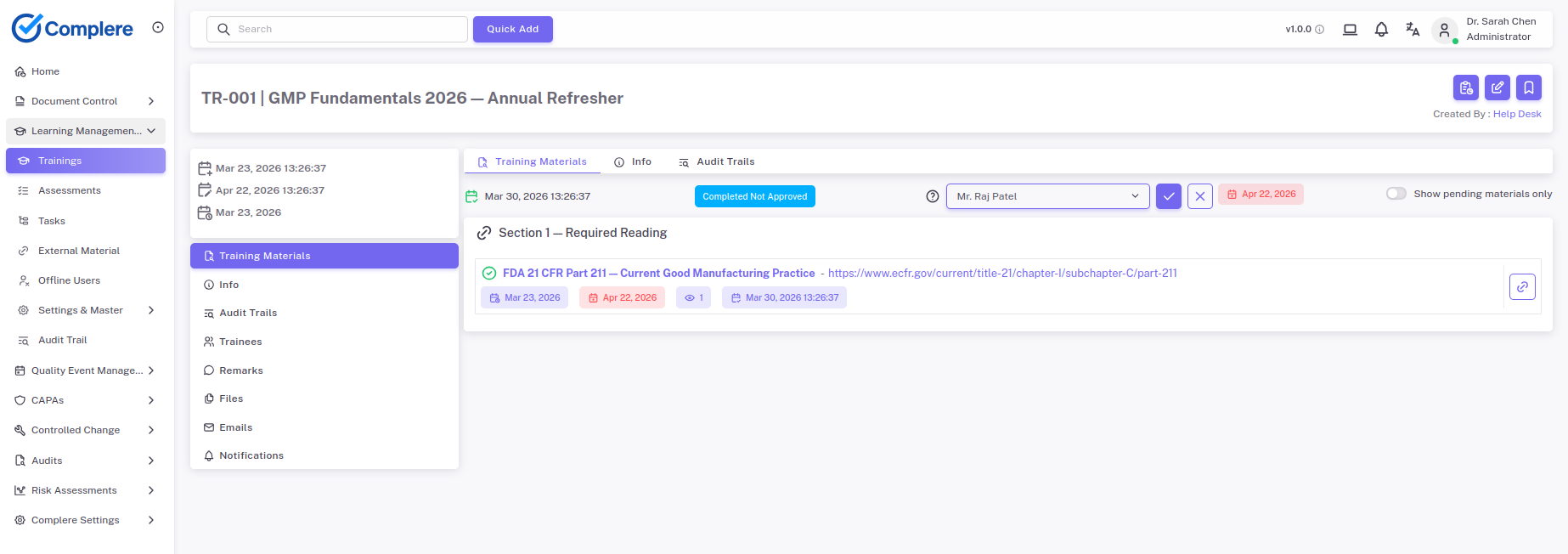

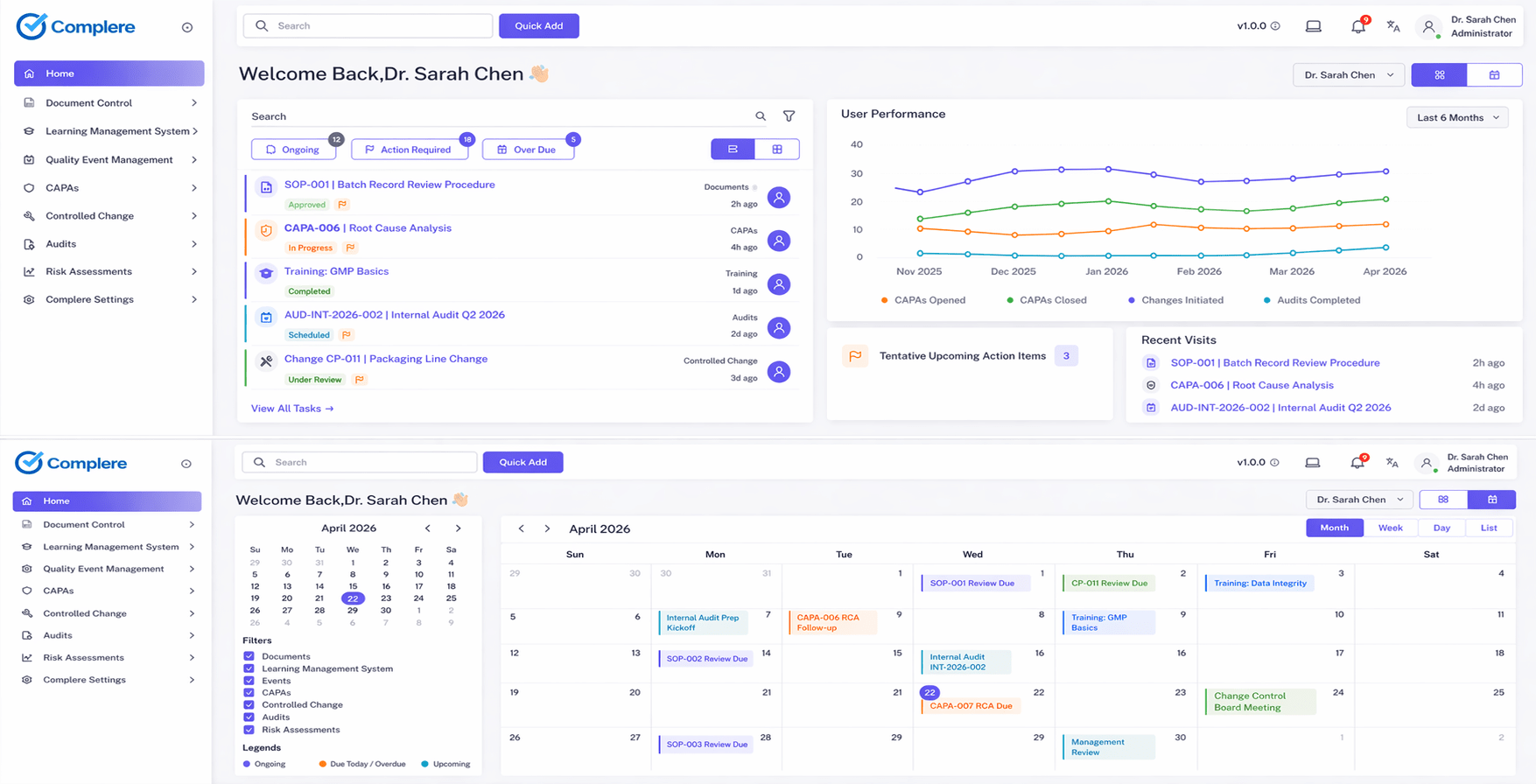

What Complere changes

Most learning management system (LMS) tools stop at a green tick. Complere keeps the reason, the training, the assessment, and the follow-up tied together.

That matters because training should not sit by itself. It should stay attached to the document change, CAPA, or audit finding that created the need for it in the first place.

- When a procedure changes, the training stays linked to that change instead of being tracked in a separate spreadsheet.

- When a gap is found, retraining and assessment history stay with the quality event that caused it.

- When someone asks why a person was trained, the system shows the reason, the record, and the follow-up in one place.

- When training happens outside the system, the evidence still gets captured instead of disappearing into a folder.

We did not build this to add administrative work for your team. We built it to keep the training record attached to the real quality event, so the chain does not break when the work moves.

Download template

If you are checking whether your current training process is good enough, use a short effectiveness checklist.

The checklist should ask:

- Was the training triggered by a real change, CAPA, or finding?

- Did the team assess competence, or only completion?

- Can we show who saw the updated procedure?

- Can we trace retraining back to the reason it was needed?

- Would an auditor be satisfied with a completion list alone?

That is the difference between a training record and a training system.

Closing thought

In pharma, training is not finished when the last signature goes in.

It is finished when the changed process is actually being used the right way.

If you cannot show that, you do not have training effectiveness. You just have completion.

Disclaimer

This article is a practical interpretation of regulated training and competence expectations and is not legal advice. Teams should assess their own workflows, training design, and intended use before changing their process.