Most pharma teams do not get CSA wrong because they misunderstand the acronym. They get it wrong because they keep trying to make it behave like old CSV.

That is where the trouble starts.

The system is live. The work is moving. Someone needs evidence that the software can be trusted in use. But if the validation model still depends on long step-by-step scripts for everything, the team ends up proving the process on paper instead of proving the system in practice.

The real-world scenario

Picture a team preparing a regulated software rollout.

Under the older CSV mindset, the first instinct is to build a thick script pack, trace every step, sign every page, and hope the file is strong enough to satisfy a future reviewer. It feels safe because it looks complete.

Then the release cadence speeds up.

The script pack gets heavier. The change log gets longer. The same tests get rewritten for every update. And when an inspector or QA reviewer asks what actually changed and why the system is still trustworthy, the answer has to be reconstructed from documents instead of being visible in the way the software is maintained.

And the reviewer usually expects that answer on the spot.

That is usually when the team realizes the paperwork is not telling the real story.

That is the shift CSA is trying to solve.

What teams usually misunderstand

CSA is not validation with less discipline.

CSV is not gone either.

The change is in how assurance is built:

- What matters most is the intended use of the software.

- The evidence should be proportionate to the risk.

- Automation can carry more of the burden when it gives stronger, repeatable confidence.

- The paperwork should support the evidence, not become the evidence.

If a team treats CSA like a shortcut, it misses the point. If it treats CSV like the only serious way to validate, it misses the opportunity.
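To make "proportionate to the risk" concrete, here is a minimal Python sketch of how a team might map each feature's intended use and risk to an assurance approach. The `Feature` fields, the two risk levels, and the selection rule are all assumptions made for illustration; a real rule set would come from the team's own risk assessment.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    HIGH = "high"
    NOT_HIGH = "not_high"


@dataclass(frozen=True)
class Feature:
    name: str                         # hypothetical feature name
    intended_use: str                 # what the team relies on the feature for
    risk: Risk                        # outcome of the team's risk assessment
    automated_checks_available: bool  # can a pipeline exercise this feature?


def assurance_activity(feature: Feature) -> str:
    """Pick an assurance approach proportionate to risk (illustrative rule only)."""
    if feature.risk is Risk.HIGH:
        # Higher-risk functions get scripted, repeatable evidence.
        if feature.automated_checks_available:
            return "automated scripted tests"
        return "manual scripted tests"
    # Lower-risk functions can use lighter approaches.
    if feature.automated_checks_available:
        return "automated regression checks"
    return "unscripted exploratory testing"


features = [
    Feature("batch release e-signature", "approve batch disposition", Risk.HIGH, True),
    Feature("dashboard colour theme", "display preference", Risk.NOT_HIGH, False),
]
plan = {f.name: assurance_activity(f) for f in features}
```

In this sketch the e-signature feature ends up with automated scripted tests, while the colour theme only gets exploratory testing. The rigor follows the risk, not a uniform script pack.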

Callout: CSV proves the system was validated. CSA proves the system can be trusted in use.

Why the old model gets heavy

CSV-heavy work tends to grow quietly.

- More manual scripts

- More repeated sign-offs

- More review time for evidence that should already be obvious

- More delay every time a release changes one small thing

That does not always mean the validation is wrong. It just means the model is carrying more weight than it needs to.

In practice, the team starts spending time proving documentation quality instead of focusing on software trust.

Where it breaks in practice

| Area | CSV-heavy mindset | CSA-first mindset |
|---|---|---|
| Evidence | Manual scripts and long execution narratives | Risk-based evidence, often automated |
| Release cadence | Validation work repeated for every change | Evidence reused and refreshed as needed |
| Inspection response | Files and screenshots assembled at the end | Evidence chain stays current through the lifecycle |
| Team effort | QA spends time reconciling paperwork | QA spends time reviewing risk and intended use |
| Outcome | Validation feels heavy and slow | Assurance feels lighter but still defensible |

The real difference is not that one side cares and the other does not. The difference is whether the system makes trust easier to maintain.

Regulatory basis

FDA’s CSA guidance, FDA’s guidance on 21 CFR Part 11, EU GMP Annex 11, and the industry guide GAMP 5 all point toward the same idea: software used in regulated work should be assessed in a way that matches its risk, intended use, and impact on the quality system.

That matters because inspection questions are rarely academic. They are practical.

What was the intended use? What evidence shows the system can be trusted? What changed after the last release? How do you know the current version still behaves the way the team depends on it to behave?

Those questions do not reward bloated documentation. They reward a system that can keep its evidence current.
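One way to picture "keeping evidence current" is to tie each piece of evidence to the exact component version it was produced against. The Python sketch below assumes a hypothetical release manifest (component name mapped to a content hash) and a hypothetical `Evidence` record; none of these names come from a specific tool or standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    component: str     # part of the system the evidence covers
    content_hash: str  # hash of the component version that was tested


def changed_components(previous: dict[str, str], current: dict[str, str]) -> set[str]:
    """Components added, removed, or modified between two release manifests."""
    names = previous.keys() | current.keys()
    return {n for n in names if previous.get(n) != current.get(n)}


def stale_evidence(evidence: list[Evidence], current: dict[str, str]) -> list[Evidence]:
    """Evidence whose tested hash no longer matches the current release."""
    return [e for e in evidence if current.get(e.component) != e.content_hash]


# Hypothetical manifests: only the e-signature component changed.
previous_release = {"audit_trail": "a1", "e_signature": "b2", "reports": "c3"}
current_release = {"audit_trail": "a1", "e_signature": "b9", "reports": "c3"}
evidence = [
    Evidence("audit_trail", "a1"),
    Evidence("e_signature", "b2"),
    Evidence("reports", "c3"),
]

changed = changed_components(previous_release, current_release)
to_refresh = [e.component for e in stale_evidence(evidence, current_release)]
```

With records like these, "what changed after the last release?" becomes a lookup instead of a reconstruction, and only the evidence for the changed component needs refreshing.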

What a better system changes

When software assurance is done well, a few things become easier:

- High-risk controls get stronger attention.

- Lower-risk work does not get buried under the same level of ceremony.

- Evidence stays tied to the real release and the real intended use.

- Validation stops feeling like a separate event and starts feeling like part of the lifecycle.

That is the practical promise of CSA. Not less rigor. Better focus.

Download template

Use this checklist when comparing CSV-heavy validation with a CSA-first model:

- Does the validation effort match the actual risk of the workflow?

- Can the team show why a feature matters without rebuilding the whole evidence chain?

- Are updates handled in a way that keeps validation current?

- Would an inspector understand the trust story quickly, without digging through a document pile?

If the answer to any of these is no, the team may still be doing CSV work, even if the system is already behaving like a CSA-first platform.

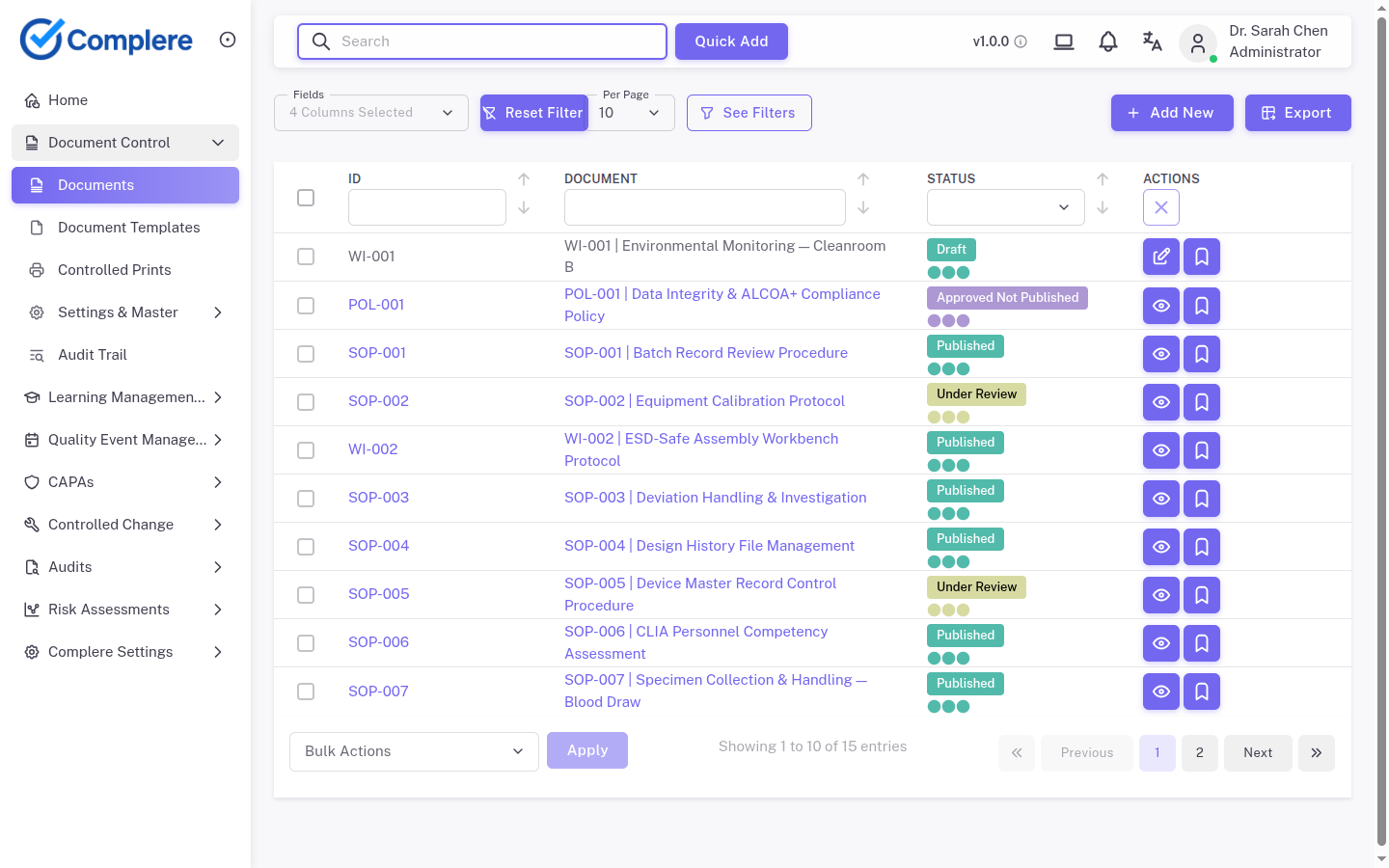

Complere fit

Complere is built for a validation model where evidence stays current instead of getting rebuilt every time the system changes.

- The validation strategy is CSA-first, with automated CI/CD evidence as the primary objective evidence.

- Lean TSR and VSR artefacts keep the trust story readable without turning it into a paperwork exercise.

- CSV-style outputs can still be generated on demand when a customer or inspector needs them.

- The traceability chain stays single and consistent instead of splitting into two different validation stories.

That matters because pharma teams do not just need a system that can be validated once. They need one that stays supportable as it evolves, and that is where most systems start to struggle.
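The idea of a single traceability chain with document-style outputs generated on demand can be sketched generically in Python. This is an illustration of the pattern only, not a description of how Complere implements it; the `TraceRecord` fields, the requirement and test IDs, and the evidence paths are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TraceRecord:
    requirement_id: str
    test_id: str
    release: str
    result: str        # "pass" or "fail", as reported by the pipeline
    evidence_ref: str  # pointer to the stored pipeline artefact


def summary(records: list[TraceRecord]) -> dict[str, int]:
    """Lean view: number of records per outcome."""
    counts: dict[str, int] = {}
    for r in records:
        counts[r.result] = counts.get(r.result, 0) + 1
    return counts


def traceability_matrix(records: list[TraceRecord]) -> str:
    """Document-style view rendered from the same records."""
    header = "Requirement | Test | Release | Result | Evidence"
    ordered = sorted(records, key=lambda r: (r.requirement_id, r.test_id))
    rows = [
        f"{r.requirement_id} | {r.test_id} | {r.release} | {r.result} | {r.evidence_ref}"
        for r in ordered
    ]
    return "\n".join([header, *rows])


records = [
    TraceRecord("URS-002", "T-020", "1.4.0", "pass", "ci/run-311/T-020.json"),
    TraceRecord("URS-001", "T-010", "1.4.0", "pass", "ci/run-311/T-010.json"),
]
lean = summary(records)
matrix = traceability_matrix(records)
```

Both views read from the same list of records, so the lean summary and the fuller matrix cannot drift into two different validation stories.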

Closing thought

CSA does not replace discipline. It changes where the discipline goes.

Instead of asking teams to prove everything the hard way every time, it asks them to prove the right things, in proportion to risk and intended use.

Sources

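- FDA guidance: Computer Software Assurance for Production and Quality System Software

- FDA guidance: Part 11, Electronic Records; Electronic Signatures - Scope and Application

- EudraLex Volume 4, EU GMP Annex 11: Computerised Systems

- ISPE GAMP 5: A Risk-Based Approach to Compliant GxP Computerized Systems (Second Edition)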